Behavior Foundation Model

Research Scientist & Team Lead at Shanghai AI Lab

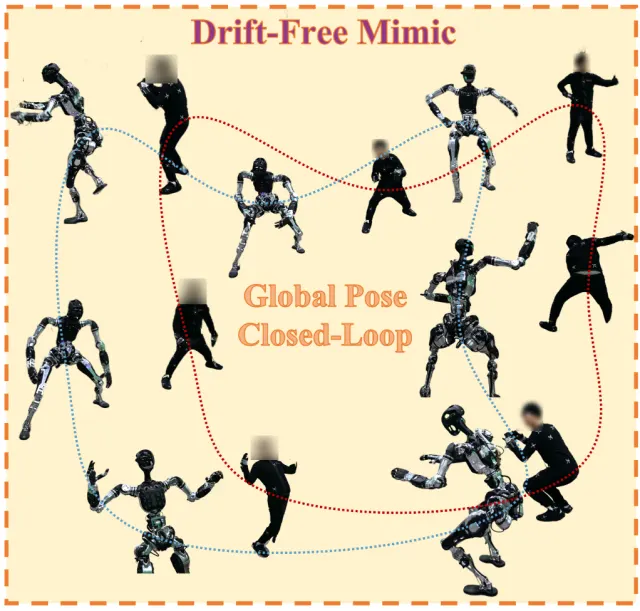

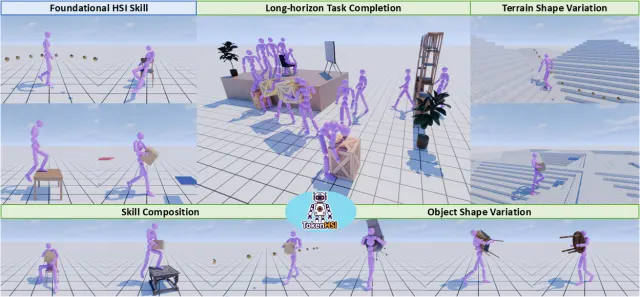

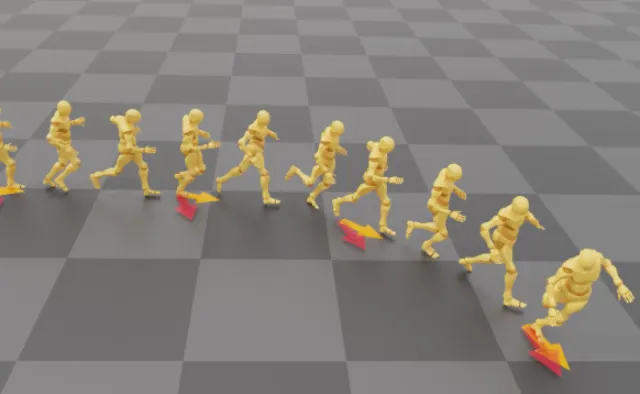

I build generalizable humanoid intelligence through physics-based simulation, embodied learning, and sim-to-real transfer.

I am a research scientist at Shanghai AI Lab working on embodied intelligence, humanoid robotics, and physics-based simulation. My research focuses on generalizable humanoid skill learning and sim-to-real transfer. I received my PhD from The Chinese University of Hong Kong in 2023, where I was advised by Prof. Dahua Lin.

My work has been published in top-tier venues including CVPR, ICML, ICCV, ECCV, SIGGRAPH, NeurIPS, and ICRA, with several oral, spotlight, and highlight presentations, and has been cited more than 10,000 times. I received the WAIC 2025 Rising Star Award and won the COCO 2018 Panoptic Segmentation Challenge.

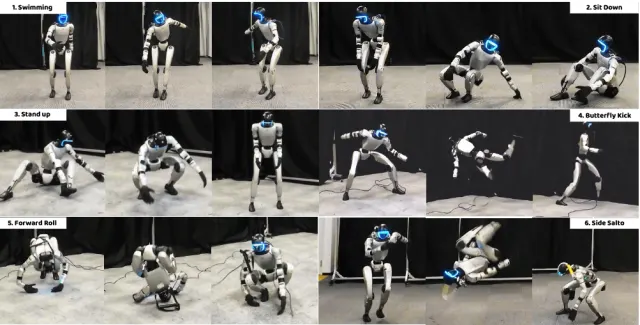

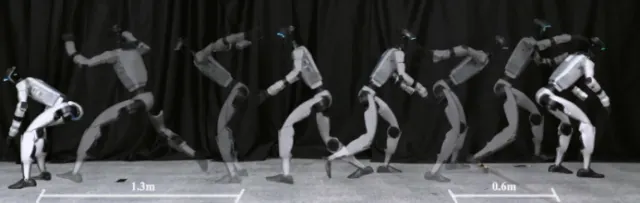

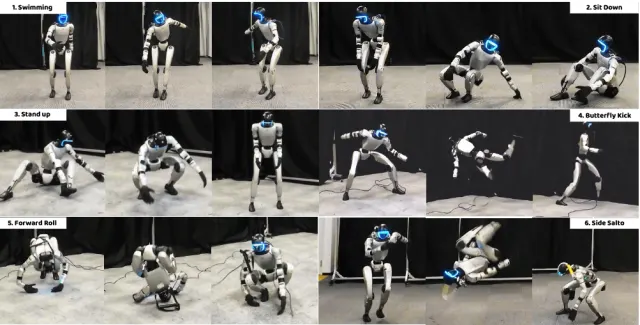

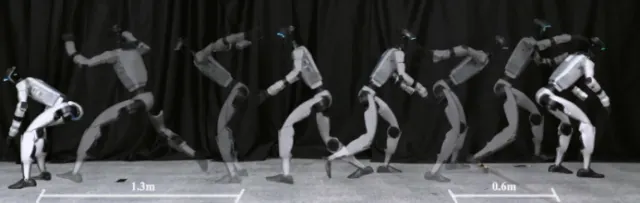

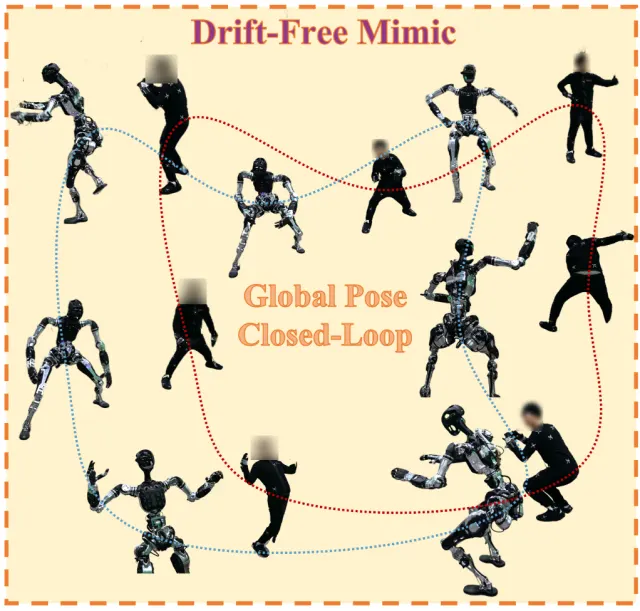

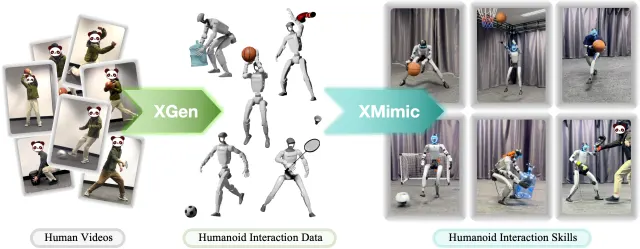

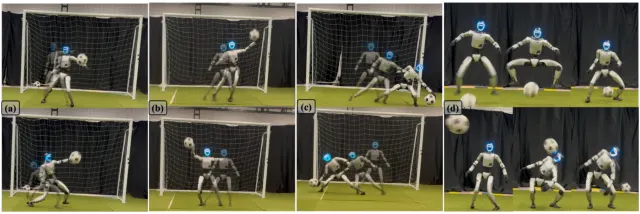

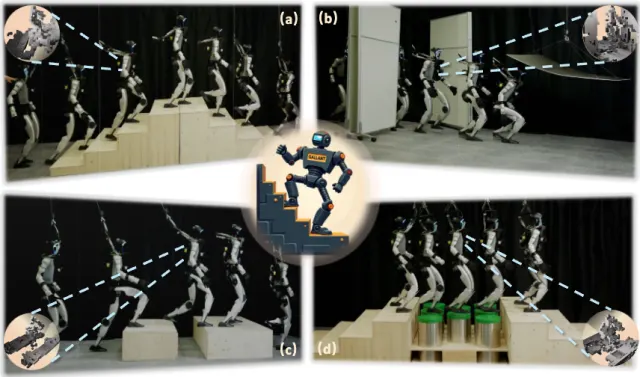

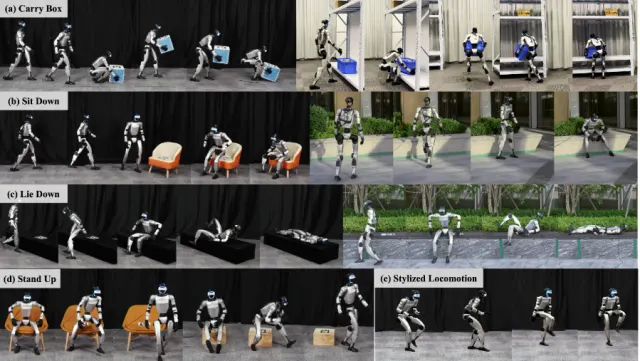

Humanoid control, locomotion, athletic skills, and character animation systems.

Research roles across embodied AI, simulation, and robotics systems.

Academic background in digital humans, intelligence science, and information science.